Difference between revisions of "Data decompression chain"

Revision as of 19:45, 19 November 2021

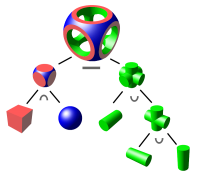

The "data decompression chain" is the sequence of expansion steps from

- very compact highest level abstract blueprints of technical systems to

- discrete and simple lowest level instances that are much larger in size.


3D modeling

Programmatic high-level 3D modelling representations in code can be

considered a highly compressed data representation of the target product.

The principal rule of programming, "don't repeat yourself", applies.

- Multiply occurring objects (including e.g. rigid body crystolecule parts) are specified only once plus the locations and orientations (poses) of their occurrences.

- Curves are specified in a not yet discretized (e.g. not yet triangulated) way. See: Non-destructive modelling

- Complex (and perhaps even dynamic) assemblies are also encoded such that they complexly unfold on code execution.

Example: laying out gemstone-based metamaterials in complex, dynamically interdigitating/interlinking/interweaving ways.
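The first point above (specify a part once, then list its poses) can be sketched as follows. This is a minimal illustration, not a format used by any real modelling tool: the names `PART`, `place` and `expand` are hypothetical, and the part is a flat 2D square rather than a crystolecule.

```python
import math

# Hypothetical part: a unit square outline, defined exactly once.
PART = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]

def place(part, pose):
    """Apply a 2D pose (x, y, angle in radians) to every vertex of a part."""
    x, y, angle = pose
    c, s = math.cos(angle), math.sin(angle)
    return [(x + c * px - s * py, y + s * px + c * py) for px, py in part]

def expand(part, poses):
    """Decompression step: one definition plus N poses becomes N explicit copies."""
    return [place(part, pose) for pose in poses]

# The poses are themselves produced by a rule instead of being listed by hand.
poses = [(2.0 * i, 0.0, 0.0) for i in range(1000)]
instances = expand(PART, poses)

# Compact side: 4 part vertices plus 3 numbers (count, spacing, angle).
compact_size = len(PART) + 3
# Expanded side: every vertex of every copy is stored explicitly.
expanded_size = sum(len(instance) for instance in instances)
```

Here the compact description holds 7 items while the expanded one holds 4000 vertices; the ratio grows with the number of occurrences.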

Note: "Programmatic" does not necessarily mean purely textual and written in "good old classical text editors".

Structural editors might and (in the author's belief) eventually will take over,

allowing for an optimal mixing of textual and graphical programmatic representation of target products in the "integrated development environments".

The decompression chain in gem-gum factories (and 3D printers)

The list goes:

- from top high level small data footprint

- to bottom low level large data footprint

- high language 1: functional, logical, connection to computer algebra system

- high language 2: imperative, functional

- Volume based modeling with "level set method" or even "signed distance fields" (organized in CSG graphs which reduce to the three operations: sign-flip, sum and maximum)

- Surface based modeling with parametric surfaces (organized in CSG graphs)

- quadric nets C1 (rarely employed as of 2017)

- triangle nets C0

- tool-paths

- Primitive signals: step-signals, rail-switch-states, clutch-states, ...
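As a rough sketch of the volume based modeling level in the list above, the following shows CSG on signed distance functions built only from sign-flip and maximum, followed by one further decompression step into voxels. All function names are hypothetical, the "sum" operation mentioned in the list is not illustrated here, and the combined functions are only guaranteed to have the correct sign (inside or outside), not the exact distance.

```python
import math

def sphere(cx, cy, cz, r):
    """Signed distance to a sphere: negative inside, positive outside."""
    return lambda x, y, z: math.sqrt((x - cx) ** 2 + (y - cy) ** 2 + (z - cz) ** 2) - r

def complement(f):
    """Sign-flip: swaps inside and outside."""
    return lambda x, y, z: -f(x, y, z)

def intersection(f, g):
    """Maximum: a point is inside only if it is inside both shapes."""
    return lambda x, y, z: max(f(x, y, z), g(x, y, z))

def union(f, g):
    """Built from the two primitives above: -max(-f, -g), which equals min(f, g)."""
    return complement(intersection(complement(f), complement(g)))

def difference(f, g):
    """f with g carved out."""
    return intersection(f, complement(g))

def voxelize(f, n, lo, hi):
    """Later chain step: sample f at the centers of an n*n*n grid over [lo, hi]^3."""
    step = (hi - lo) / n
    centers = [lo + (i + 0.5) * step for i in range(n)]
    return [(i, j, k)
            for i, x in enumerate(centers)
            for j, y in enumerate(centers)
            for k, z in enumerate(centers)
            if f(x, y, z) < 0.0]

# A hollow shell: a unit sphere with a smaller concentric sphere removed.
shell = difference(sphere(0, 0, 0, 1.0), sphere(0, 0, 0, 0.5))
voxels = voxelize(shell, 8, -1.0, 1.0)
```

The CSG graph stays a handful of nested function calls, while the voxel list grows with the cube of the sampling resolution `n`.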

(TODO: add details to decompression chain points)

(Compilation) Targets

Beside the actual physical product another desired product of the code is just a digital preview.

So there are several desired outputs for one and the same code.

Possibly useful for compiling the same code to different targets (as needed in this context): Compiling to categories (Conal Elliott)

Possible desired outputs include but are not limited to:

- actual physical target product object

- virtual simulation of the potential product (2D or some 3D format)

- approximation of output in form of utility fog?
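The idea of several outputs from one and the same code can be illustrated with a toy model that is expanded by a shared front end and then handed to two different back ends. This is a much simpler stand-in than "Compiling to categories" and does not implement that technique; `MODEL`, `cells`, `to_preview` and `to_placement_commands` are hypothetical names.

```python
# Hypothetical model: axis-aligned rectangles (x, y, width, height) on an integer grid.
MODEL = [(0, 0, 4, 1), (0, 1, 1, 2)]

def cells(model):
    """Shared front end: expand the rectangles into the set of occupied grid cells."""
    return {(x + dx, y + dy)
            for x, y, w, h in model
            for dx in range(w)
            for dy in range(h)}

def to_preview(model):
    """Target 1: a digital preview, here simply as text."""
    occupied = cells(model)
    width = max(x for x, _ in occupied) + 1
    height = max(y for _, y in occupied) + 1
    return "\n".join(
        "".join("#" if (x, y) in occupied else "." for x in range(width))
        for y in range(height))

def to_placement_commands(model):
    """Target 2: an ordered command list, row by row, as a stand-in for physical output."""
    ordered = sorted((y, x) for x, y in cells(model))
    return [("place", x, y) for y, x in ordered]

preview = to_preview(MODEL)
commands = to_placement_commands(MODEL)
```

Both targets consume the same `MODEL`; only the last step differs, which is the property the section asks for.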

3D modeling & functional programming

Modeling of static 3D models is purely declarative.

- example: OpenSCAD

...

Similar situations in today's computer architectures

- high level language ->

- compiler infrastructure (e.g. llvm) ->

- assembler language ->

- actual actions of the target data processing machine
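A loose analogy to the chain above can be observed with Python's standard `dis` module: one short source line is compiled into a longer sequence of lower-level instructions before anything executes. Note the assumptions: CPython bytecode targets a virtual machine rather than real assembler, and the exact instruction count depends on the Python version, so none is stated here.

```python
import dis

# High level: one compact line of source code.
SOURCE = "total = sum(i * i for i in range(10))"

# Intermediate level: the compiler turns it into a code object.
code = compile(SOURCE, "<example>", "exec")

# Lower level: the top-level bytecode instructions of that code object
# (the generator expression lives in a nested code object of its own).
instructions = list(dis.get_instructions(code))

# Lowest level visible from here: the interpreter actually performs the work.
namespace = {}
exec(code, namespace)
```

Printing `[ins.opname for ins in instructions]` shows the expanded form of the single source line.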

Bootstrapping of the decompression chain

One of the concerns regarding the feasibility of advanced productive nanosystems is the worry that all the necessary data cannot be fed to

- the mechanosynthesis cores and

- the crystolecule assembly robotics

Incidentally, the former are mostly hard-coded and do not need much data.

For example, this size comparison in E. Drexler's TEDx talk (2015) 13:35 can (if taken too literally)

lead to the misjudgment that there is a fundamentally insurmountable data bottleneck.

Of course, trying to feed yottabits per second over those few pins would be ridiculous and impossible, but that is not what is planned.

(wiki-TODO: move this topic to Data IO bottleneck)

We already know how to avoid such a bottleneck.

Although we program computers with our fingers, delivering just a few bits per second,

computers now process petabits per second internally.

The goal is reachable by gradually building up a hierarchy of decompression steps.

The lowest-level, highest-volume data is generated internally and locally, very near to where it is finally "consumed".
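The principle of generating the bulk of the data next to its consumer can be sketched with a lazy generator: three integers enter, and a long stream of step signals is produced one item at a time without ever being stored or transmitted as a whole. The step names and the four-steps-per-cell figure are invented for illustration only.

```python
from itertools import islice

def unit_cell_steps():
    """Hypothetical low-level step signals for placing one unit cell."""
    yield from ("advance", "grip", "press", "release")

def block_steps(nx, ny, nz):
    """Lazily expand a three-number block description into a stream of step signals."""
    for ix in range(nx):
        for iy in range(ny):
            for iz in range(nz):
                for step in unit_cell_steps():
                    yield (ix, iy, iz, step)

def count_steps(nx, ny, nz):
    """Size of the expanded stream, computed without generating it."""
    return nx * ny * nz * 4

# Input over the "few pins": three integers.
spec = (100, 100, 100)

# The consumer pulls signals as needed; only a handful exist at any moment.
first_five = list(islice(block_steps(*spec), 5))
total = count_steps(*spec)
```

With this `spec`, three numbers stand for four million step signals, and stacking several such expansion stages multiplies the ratios.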

Related

- Control hierarchy

- mergement of GUI-IDE & code-IDE

- The reverse: while decompressing is a technique, compressing is an art (a vague analog to differentiation and integration). See: the source of new axioms (Warning! You are moving into more speculative areas.)

- Why identical copying is unnecessary for foodsynthesis and Synthesis of food. In the case of synthesis of food, the vastly different decompression chains of biological systems and advanced diamondoid nanofactories lead to the situation that nanofactories cannot synthesize exact copies of food down to the placement of every atom. See Food structure irrelevancy gap for a viable alternative.

- constructive corecursion

- Data IO bottleneck

- Compiling to categories (Conal Elliott)

External Links

Wikipedia

- Solid_modeling, Computer_graphics_(computer_science)

- Constructive_solid_geometry

- Related to volume based modeling: Quadrics (in context of mathematical shapes) (in context of surface differential geometry)

- Related to volume based modeling: Level-set_method Signed_distance_function

- Avoidable in steps after volume based modeling: Triangulation_(geometry), Surface_triangulation